The Pseudoscience Super-Challenge: Helping students spot pseudoscientific claims

Posted May 10, 2017

By Rodney Schmaltz

One of the goals in my courses is to help students separate good information from bad, with a focus on ensuring that students can spot pseudoscientific claims. This can be extremely challenging. Even after a discussion of the difference between science and pseudoscience, I have had students say, “I understand that the research may not be there, but I’ve used homeopathy and my cold was gone almost immediately,” or “I know that most houses can’t be haunted, but I’ve seen something I’m sure even you can’t explain!” One of the biggest hurdles that instructors face is that many of students’ pseudoscientific beliefs are based on anecdotal but meaningful experiences. For example, several students in my classes have claimed to have witnessed some form of ghostly apparition. From their descriptions, I can usually offer a counter-explanation for the experience, such as the sighting being due to pareidolia or expectation (Shermer, 2011). If not handled properly, however, these counter-explanations will not change the student’s belief. This is an interesting example of the bias blind spot (Pronin, Lin, & Ross, 2002): students recognize how others could mistake an instance of pareidolia for a ghost, yet insist that their own ghost experience was different.

So what’s an instructor to do? Even the best students may believe in some form of pseudoscience (e.g., Impey, Buxner, & Antonellis, 2012). In fact, some research indicates that people with higher intelligence tend to be better at defending their own arguments but worse at accepting sound counterarguments. Apple co-founder Steve Jobs, for example, was a strong advocate of alternative medicine. On top of this, if discussions of pseudoscience are not handled carefully in the classroom, they can produce a backfire effect (Lewandowsky et al., 2012): students may remember the example of pseudoscience but forget that the claim is not supported by empirical evidence.

To combat the backfire effect and to promote good scientific thinking, one classroom activity that I have found highly effective is challenging students to find examples of pseudoscience on or near campus. I call it the (admittedly poorly named) “Pseudoscience Super-Challenge.” Students work in pairs and have 30 minutes to find an example of pseudoscience. For extra inspiration, I offer the winning group a coffee or hot chocolate at the next class meeting. Students are allowed to leave the classroom to hunt for examples. When they return, each group gives a short description of what they found and why it is an example of pseudoscience. At the end of the lecture, students vote for the best example. From personal experience, do not allow students to vote for themselves; if you do, nearly every vote will end in a tie.

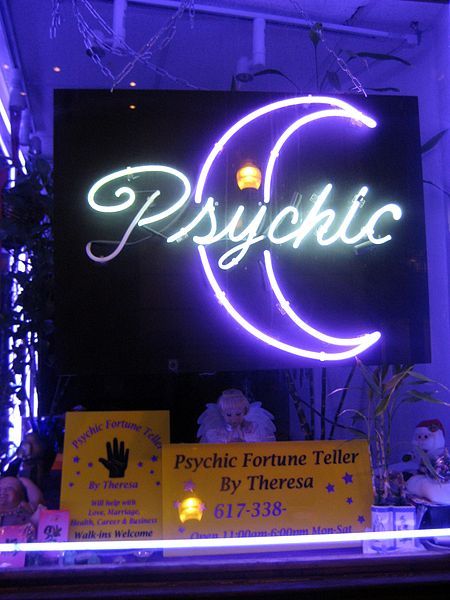

This activity is effective for several reasons. First, students are generally amazed at how quickly they can find an example of pseudoscience. The examples are typically found in library books or on posters promoting questionable study tips such as speed reading. One pair of students went off campus and found a psychic nearby; others have found “medical” offices that offer energy healing, and one group even bought homeopathic pills from a local pharmacy.

Second, the activity requires students to apply the warning signs of pseudoscience, such as:

- extraordinary claims without extraordinary evidence,

- a reliance on anecdotal evidence,

- and an absence of peer review (for a thorough list, see Schmaltz & Lilienfeld, 2014).

If an instructor were to describe a dubious healing technique and how it is supposed to work, and then tell students that it is an example of pseudoscience, students may remember the healing technique but forget that it is not empirically validated. Providing students with the hallmarks of pseudoscience and then challenging them to find an example places the focus on the warning signs of pseudoscience rather than on any specific pseudoscientific claim.

Students find the Pseudoscience Super-Challenge highly engaging; more importantly, the activity encourages them to consider the warning signs of pseudoscience (Lilienfeld et al., 2012). Having students present their example and explain why it qualifies as pseudoscience leads to fruitful class discussion and gives students an opportunity to debate the nature of science versus pseudoscience.

If you are interested in trying this exercise in class, here’s a brief overview:

1. Review the warning signs of pseudoscience with your students. Here’s a link to an article by Scott Lilienfeld and me on the warning signs: https://doi.org/10.3389/fpsyg.2014.00336. In it, we briefly discuss the Pseudoscience Super-Challenge, as well as other examples that can be used to promote scientific thinking in the classroom.

2. Assign students to work in pairs or larger groups depending on class size.

3. Give students 30 minutes to find the best example of pseudoscience they can. I highly recommend offering coffee as a prize for the group with the best example. It’s a surprisingly powerful motivator.

4. Once students return, give each group 2–3 minutes to describe their example and explain why it should be considered pseudoscience. Students will need to draw on the material discussed earlier in class and frame their example in terms of the warning signs of pseudoscience.

5. Following the presentations, allow the students to vote for the best example of pseudoscience.

6. As a follow-up, tell students to look for further examples before the next class. Challenge them to find a better example than the one that won the prize.

Students are often shocked at how easy it is to find examples of pseudoscience. This activity is a fun way to get students thinking about the claims they see every day and to practice recognizing the warning signs of pseudoscience.

Bio

Rodney Schmaltz is an Associate Professor of Psychology at MacEwan University. His research focuses on pseudoscientific thinking, with an emphasis on strategies to promote and teach scientific skepticism.

References

Impey, C., Buxner, S., & Antonellis, J. (2012). Non-scientific beliefs among undergraduate students. Astronomy Education Review, 11, 0111. https://doi.org/10.3847/AER2012016

Lewandowsky, S., Ecker, U. K., Seifert, C. M., Schwarz, N., & Cook, J. (2012). Misinformation and its correction: Continued influence and successful debiasing. Psychological Science in the Public Interest, 13, 106–131. https://doi.org/10.1177/1529100612451018

Lilienfeld, S. O., Ammirati, R., & David, M. (2012). Distinguishing science from pseudoscience in school psychology: Science and scientific thinking as safeguards against human error. Journal of School Psychology, 50, 7–36. https://doi.org/10.1016/j.jsp.2011.09.006

Pronin, E., Lin, D. Y., & Ross, L. (2002). The bias blind spot: Perceptions of bias in self versus others. Personality and Social Psychology Bulletin, 28(3), 369-381.

Schmaltz, R., & Lilienfeld, S. O. (2014). Hauntings, homeopathy, and the Hopkinsville Goblins: Using pseudoscience to teach scientific thinking. Frontiers in Psychology, 5, 336. https://doi.org/10.3389/fpsyg.2014.00336

Shermer, M. (2011). The believing brain: From ghosts and gods to politics and conspiracies--how we construct beliefs and reinforce them as truths. New York: Times Books.