Multi-Modal Perception

By Lorin Lachs, California State University, Fresno

Most of the time, we perceive the world as a unified bundle of sensations from multiple sensory modalities. In other words, our perception is multimodal. This module provides an overview of multimodal perception, including information about its neurobiology and its psychological effects.

Learning Objectives

- Define the basic terminology and basic principles of multimodal perception.

- Describe the neuroanatomy of multisensory integration and name some of the regions of the cortex and midbrain that have been implicated in multisensory processing.

- Explain the difference between multimodal phenomena and crossmodal phenomena.

- Give examples of multimodal and crossmodal behavioral effects.

Perception: Unified

Although it has been traditional to study the various senses independently, most of the time, perception operates in the context of information supplied by multiple sensory modalities at the same time. For example, imagine if you witnessed a car collision. You could describe the stimulus generated by this event by considering each of the senses independently; that is, as a set of unimodal stimuli. Your eyes would be stimulated with patterns of light energy bouncing off the cars involved. Your ears would be stimulated with patterns of acoustic energy emanating from the collision. Your nose might even be stimulated by the smell of burning rubber or gasoline.

However, all of this information would be relevant to the same thing: your perception of the car collision. Indeed, unless someone was to explicitly ask you to describe your perception in unimodal terms, you would most likely experience the event as a unified bundle of sensations from multiple senses. In other words, your perception would be multimodal. The question is whether the various sources of information involved in this multimodal stimulus are processed separately by the perceptual system or not.

For the last few decades, perceptual research has pointed to the importance of multimodal perception: the effects on the perception of events and objects in the world that are observed when there is information from more than one sensory modality. Most of this research indicates that, at some point in perceptual processing, information from the various sensory modalities is integrated. In other words, the information is combined and treated as a unitary representation of the world.

Questions About Multimodal Perception

Several theoretical problems are raised by multimodal perception. After all, the world is a “blooming, buzzing confusion” that constantly bombards our perceptual system with light, sound, heat, pressure, and so forth. To make matters more complicated, these stimuli come from multiple events spread out over both space and time. To return to our example: Let’s say the car crash you observed happened on Main Street in your town. Your perception during the car crash might include a lot of stimulation that was not relevant to the car crash. For example, you might also overhear the conversation of a nearby couple, see a bird flying into a tree, or smell the delicious scent of freshly baked bread from a nearby bakery (or all three!). However, you would most likely not make the mistake of associating any of these stimuli with the car crash. In fact, we rarely combine the auditory stimuli associated with one event with the visual stimuli associated with another (although, under some unique circumstances, such as ventriloquism, we do). How is the brain able to take the information from separate sensory modalities and match it appropriately, so that stimuli that belong together stay together, while stimuli that do not belong together get treated separately? In other words, how does the perceptual system determine which unimodal stimuli must be integrated, and which must not?

Once unimodal stimuli have been appropriately integrated, we can further ask about the consequences of this integration: What are the effects of multimodal perception that would not be present if perceptual processing were only unimodal? Perhaps the most robust finding in the study of multimodal perception concerns this last question. No matter whether you are looking at the actions of neurons or the behavior of individuals, it has been found that responses to multimodal stimuli are typically greater than the sum of the responses to each modality presented independently. In other words, if you presented the stimulus in one modality at a time and measured the response to each of these unimodal stimuli, you would find that adding them together would still not equal the response to the multimodal stimulus. This superadditive effect of multisensory integration indicates that there are consequences resulting from the integrated processing of multimodal stimuli.
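The comparison described above is simple arithmetic, and a minimal sketch may make it concrete. The function name `is_superadditive` and the firing rates below are hypothetical, invented purely for illustration; they are not data from any study cited in this module.

```python
def is_superadditive(multimodal, unimodal_responses):
    """Return True when the multimodal response exceeds the SUM of the
    responses measured for each unimodal component on its own."""
    return multimodal > sum(unimodal_responses)


# Hypothetical firing rates (spikes per second), made up for illustration.
auditory_alone = 4.0
visual_alone = 6.0
audiovisual = 18.0

# 18.0 is compared against 4.0 + 6.0, not against the larger single response.
print(is_superadditive(audiovisual, [auditory_alone, visual_alone]))
```

Note that the test is against the sum of the unimodal responses. A multimodal response that merely exceeds the strongest unimodal response, but not the sum, would be enhanced without being superadditive.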

The extent of the superadditive effect (sometimes referred to as multisensory enhancement) is determined by the strength of the response to the single stimulus modality with the biggest effect. To understand this concept, imagine someone speaking to you in a noisy environment (such as a crowded party). When discussing this type of multimodal stimulus, it is often useful to describe it in terms of its unimodal components: In this case, there is an auditory component (the sounds generated by the speech of the person speaking to you) and a visual component (the visual form of the face movements as the person speaks to you). In the crowded party, the auditory component of the person’s speech might be difficult to process (because of the surrounding party noise). The potential for visual information about speech—lipreading—to help in understanding the speaker’s message is, in this situation, quite large. However, if you were listening to that same person speak in a quiet library, the auditory portion would probably be sufficient for receiving the message, and the visual portion would help very little, if at all (Sumby & Pollack, 1954). In general, for a stimulus with multimodal components, if the response to each component (on its own) is weak, then the opportunity for multisensory enhancement is very large. However, if one component—by itself—is sufficient to evoke a strong response, then the opportunity for multisensory enhancement is relatively small. This finding is called the Principle of Inverse Effectiveness (Stein & Meredith, 1993) because the effectiveness of multisensory enhancement is inversely related to the unimodal response with the greatest effect.
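One way to see the inverse relationship is to express enhancement as a percentage gain over the strongest unimodal response. The sketch below assumes that index, which is commonly used in the multisensory literature but is not defined in this module. The function name and the accuracy scores for the party and library scenarios are hypothetical values chosen only to illustrate the pattern.

```python
def enhancement_percent(multimodal, unimodal_responses):
    """Percentage gain of the multimodal response over the best unimodal one.

    Assumed index: 100 * (multimodal - best_unimodal) / best_unimodal.
    """
    best = max(unimodal_responses)
    if best <= 0:
        raise ValueError("the best unimodal response must be positive")
    return 100.0 * (multimodal - best) / best


# Hypothetical word-recognition accuracy (percent correct), for illustration.
# Crowded party: both components are weak on their own.
party = enhancement_percent(60.0, [20.0, 10.0])
# Quiet library: the auditory component alone is nearly sufficient.
library = enhancement_percent(98.0, [95.0, 10.0])

print(f"party: {party:.1f}%  library: {library:.1f}%")
```

With these made-up numbers, the relative gain is far larger in the party scenario, where the best unimodal response is weak, than in the library scenario, where it is already strong.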

Another important theoretical question about multimodal perception concerns the neurobiology that supports it. After all, at some point, the information from each sensory modality is definitely separated (e.g., light comes in through the eyes, and sound comes in through the ears). How does the brain take information from different neural systems (optic, auditory, etc.) and combine it? If our experience of the world is multimodal, then it must be the case that at some point during perceptual processing, the unimodal information coming from separate sensory organs—such as the eyes, ears, skin—is combined. A related question asks where in the brain this integration takes place. We turn to these questions in the next section.

Biological Bases of Multimodal Perception

Multisensory Neurons and Neural Convergence

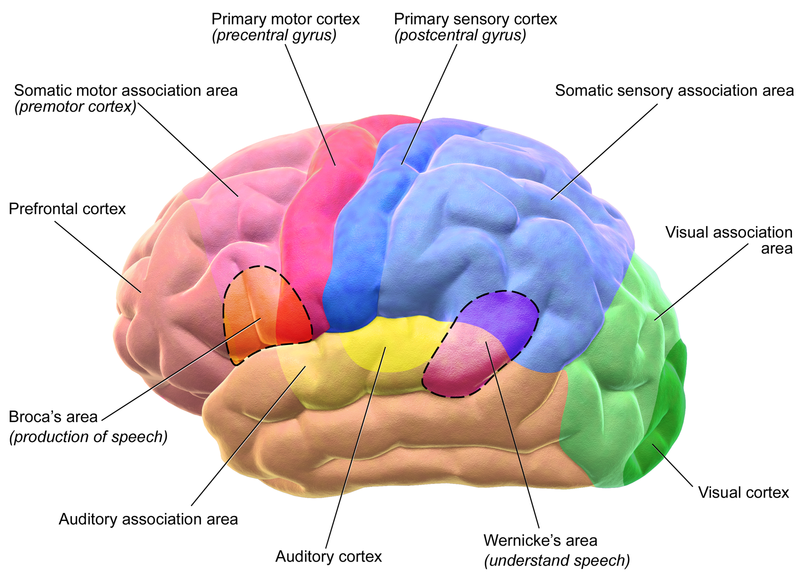

A surprisingly large number of brain regions in the midbrain and cerebral cortex are related to multimodal perception. These regions contain neurons that respond to stimuli from not just one, but multiple sensory modalities. For example, a region called the superior temporal sulcus contains single neurons that respond to both the visual and auditory components of speech (Calvert, 2001; Calvert, Hansen, Iversen, & Brammer, 2001). These multisensory convergence zones are interesting, because they are a kind of neural intersection of information coming from the different senses. That is, neurons that are devoted to the processing of one sense at a time—say vision or touch—send their information to the convergence zones, where it is processed together.

One of the most closely studied multisensory convergence zones is the superior colliculus (Stein & Meredith, 1993), which receives inputs from many different areas of the brain, including regions involved in the unimodal processing of visual and auditory stimuli (Edwards, Ginsburgh, Henkel, & Stein, 1979). Interestingly, the superior colliculus is involved in the “orienting response,” which is the behavior associated with moving one’s eye gaze toward the location of a seen or heard stimulus. Given this function for the superior colliculus, it is hardly surprising that there are multisensory neurons found there (Stein & Stanford, 2008).

Crossmodal Receptive Fields

The details of the anatomy and function of multisensory neurons help to answer the question of how the brain integrates stimuli appropriately. In order to understand the details, we need to discuss a neuron’s receptive field. All over the brain, neurons can be found that respond only to stimuli presented in a very specific region of the space immediately surrounding the perceiver. That region is called the neuron’s receptive field. If a stimulus is presented in a neuron’s receptive field, then that neuron responds by increasing or decreasing its firing rate. If a stimulus is presented outside of a neuron’s receptive field, then there is no effect on the neuron’s firing rate. Importantly, when two neurons send their information to a third neuron, the third neuron’s receptive field is the combination of the receptive fields of the two input neurons. This is called neural convergence, because the information from multiple neurons converges on a single neuron. In the case of multisensory neurons, the convergence arrives from different sensory modalities. Thus, the receptive fields of multisensory neurons are the combination of the receptive fields of neurons located in different sensory pathways.

Now, it could be the case that the neural convergence that results in multisensory neurons is set up in a way that ignores the locations of the input neurons’ receptive fields. Amazingly, however, these crossmodal receptive fields overlap. For example, a multisensory neuron in the superior colliculus might receive input from two unimodal neurons: one with a visual receptive field and one with an auditory receptive field. It has been found that the unimodal receptive fields refer to the same locations in space—that is, the two unimodal neurons respond to stimuli in the same region of space. Crucially, the overlap in the crossmodal receptive fields plays a vital role in the integration of crossmodal stimuli. When the information from the separate modalities is coming from within these overlapping receptive fields, then it is treated as having come from the same location—and the neuron responds with a superadditive (enhanced) response. So, part of the information that is used by the brain to combine multimodal inputs is the location in space from which the stimuli came.

This pattern is common across many multisensory neurons in multiple regions of the brain. Because of this, researchers have defined the spatial principle of multisensory integration: Multisensory enhancement is observed when the sources of stimulation are spatially related to one another. A related phenomenon concerns the timing of crossmodal stimuli. Enhancement effects are observed in multisensory neurons only when the inputs from different senses arrive within a short time of one another (e.g., Recanzone, 2003).
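The spatial and temporal constraints described above can be sketched as a simple rule. This is a deliberately simplified, all-or-none toy model, not a description of how real neurons compute. The class names, the one-dimensional receptive fields (in degrees of azimuth), and the 100 ms default window are all assumptions made for illustration; the module itself specifies only "a short time."

```python
from dataclasses import dataclass


@dataclass
class Stimulus:
    azimuth_deg: float  # horizontal location of the stimulus
    onset_ms: float     # time at which the stimulus begins


@dataclass
class ReceptiveField:
    min_deg: float
    max_deg: float

    def contains(self, azimuth_deg):
        return self.min_deg <= azimuth_deg <= self.max_deg


def expect_enhancement(visual, auditory, visual_rf, auditory_rf, window_ms=100.0):
    """Toy rule: enhancement is expected only when each stimulus falls inside
    the matching receptive field AND the two onsets are close in time.
    window_ms is a hypothetical placeholder, not an empirical value."""
    in_space = (visual_rf.contains(visual.azimuth_deg)
                and auditory_rf.contains(auditory.azimuth_deg))
    in_time = abs(visual.onset_ms - auditory.onset_ms) <= window_ms
    return in_space and in_time


# Overlapping visual and auditory receptive fields of one multisensory neuron.
visual_rf = ReceptiveField(-10.0, 10.0)
auditory_rf = ReceptiveField(-15.0, 15.0)

flash = Stimulus(azimuth_deg=0.0, onset_ms=0.0)
print(expect_enhancement(flash, Stimulus(5.0, 40.0), visual_rf, auditory_rf))
print(expect_enhancement(flash, Stimulus(60.0, 40.0), visual_rf, auditory_rf))
print(expect_enhancement(flash, Stimulus(5.0, 500.0), visual_rf, auditory_rf))
```

The first call pairs a flash with a nearby, nearly simultaneous sound; the second moves the sound outside the auditory receptive field; the third keeps the location but delays the sound well beyond the assumed window.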

Multimodal Processing in Unimodal Cortex

Multisensory neurons have also been observed outside of multisensory convergence zones, in areas of the brain that were once thought to be dedicated to the processing of a single modality (unimodal cortex). For example, the primary visual cortex was long thought to be devoted to the processing of exclusively visual information. The primary visual cortex is the first stop in the cortex for information arriving from the eyes, so it processes very low-level information like edges. Interestingly, neurons have been found in the primary visual cortex that receive information from the primary auditory cortex (where sound information from the auditory pathway is processed) and from the superior temporal sulcus (a multisensory convergence zone mentioned above). This is remarkable because it indicates that the processing of visual information is, from a very early stage, influenced by auditory information.

There may be two ways for these multimodal interactions to occur. First, it could be that the processing of auditory information in relatively late stages of processing feeds back to influence low-level processing of visual information in unimodal cortex (McDonald, Teder-Sälejärvi, Russo, & Hillyard, 2003). Alternatively, it may be that areas of unimodal cortex contact each other directly (Driver & Noesselt, 2008; Macaluso & Driver, 2005), such that multimodal integration is a fundamental component of all sensory processing.

In fact, the large numbers of multisensory neurons distributed all around the cortex (in multisensory convergence areas and in primary cortices) have led some researchers to propose that a drastic reconceptualization of the brain is necessary (Ghazanfar & Schroeder, 2006). They argue that the cortex should not be considered as being divided into isolated regions that process only one kind of sensory information. Rather, they propose that these areas merely prefer to process information from specific modalities but engage in low-level multisensory processing whenever it is beneficial to the perceiver (Vasconcelos et al., 2011).

Behavioral Effects of Multimodal Perception

Although neuroscientists tend to study very simple interactions between neurons, the fact that they’ve found so many crossmodal areas of the cortex seems to hint that the way we experience the world is fundamentally multimodal. As discussed above, our intuitions about perception are consistent with this; it does not seem as though our perception of events is constrained to the perception of each sensory modality independently. Rather, we perceive a unified world, regardless of the sensory modality through which we perceive it.

It will probably require many more years of research before neuroscientists uncover all the details of the neural machinery involved in this unified experience. In the meantime, experimental psychologists have contributed to our understanding of multimodal perception through investigations of the behavioral effects associated with it. These effects fall into two broad classes. The first class—multimodal phenomena—concerns the binding of inputs from multiple sensory modalities and the effects of this binding on perception. The second class—crossmodal phenomena—concerns the influence of one sensory modality on the perception of another (Spence, Senkowski, & Roder, 2009).

Multimodal Phenomena

Audiovisual Speech

Multimodal phenomena concern stimuli that generate simultaneous (or nearly simultaneous) information in more than one sensory modality. As discussed above, speech is a classic example of this kind of stimulus. When an individual speaks, she generates sound waves that carry meaningful information. If the perceiver is also looking at the speaker, then that perceiver also has access to visual patterns that carry meaningful information. Of course, as anyone who has ever tried to lipread knows, there are limits on how informative visual speech information is. Even so, the visual speech pattern alone is sufficient for very robust speech perception. Most people assume that deaf individuals are much better at lipreading than individuals with normal hearing. It may come as a surprise to learn, however, that some individuals with normal hearing are also remarkably good at lipreading (sometimes called “speechreading”). In fact, there is a wide range of speechreading ability in both normal hearing and deaf populations (Andersson, Lyxell, Rönnberg, & Spens, 2001). However, the reasons for this wide range of performance are not well understood (Auer & Bernstein, 2007; Bernstein, 2006; Bernstein, Auer, & Tucker, 2001; Mohammed et al., 2005).

How does visual information about speech interact with auditory information about speech? One of the earliest investigations of this question examined the accuracy of recognizing spoken words presented in a noisy context, much like in the example above about talking at a crowded party. To study this phenomenon experimentally, some irrelevant noise (“white noise,” which sounds like a radio tuned between stations) was presented to participants. Embedded in the white noise were spoken words, and the participants’ task was to identify the words. There were two conditions: one in which only the auditory component of the words was presented (the “auditory-alone” condition), and one in which both the auditory and visual components were presented (the “audiovisual” condition). The noise levels were also varied, so that on some trials, the noise was very loud relative to the loudness of the words, and on other trials, the noise was very soft relative to the words. Sumby and Pollack (1954) found that the accuracy of identifying the spoken words was much higher in the audiovisual condition than in the auditory-alone condition. In addition, the pattern of results was consistent with the Principle of Inverse Effectiveness: The advantage gained by audiovisual presentation was highest when the auditory-alone condition performance was lowest (i.e., when the noise was loudest). At these noise levels, the audiovisual advantage was considerable: It was estimated that allowing the participant to see the speaker was equivalent to turning the volume of the noise down by over half. Clearly, the audiovisual advantage can have dramatic effects on behavior.

Another phenomenon using audiovisual speech is a very famous illusion called the “McGurk effect” (named after one of its discoverers). In the classic formulation of the illusion, a movie is recorded of a speaker saying the syllables “gaga.” Another movie is made of the same speaker saying the syllables “baba.” Then, the auditory portion of the “baba” movie is dubbed onto the visual portion of the “gaga” movie. This combined stimulus is presented to participants, who are asked to report what the speaker in the movie said. McGurk and MacDonald (1976) reported that 98 percent of their participants reported hearing the syllable “dada”—which was in neither the visual nor the auditory components of the stimulus. These results indicate that when visual and auditory information about speech is integrated, it can have profound effects on perception.

Tactile/Visual Interactions in Body Ownership

Not all multisensory integration phenomena concern speech, however. One particularly compelling multisensory illusion involves the integration of tactile and visual information in the perception of body ownership. In the “rubber hand illusion” (Botvinick & Cohen, 1998), an observer is situated so that one of his hands is not visible. A fake rubber hand is placed near the obscured hand, but in a visible location. The experimenter then uses a light paintbrush to simultaneously stroke the obscured hand and the rubber hand in the same locations. For example, if the middle finger of the obscured hand is being brushed, then the middle finger of the rubber hand will also be brushed. This sets up a correspondence between the tactile sensations (coming from the obscured hand) and the visual sensations (of the rubber hand). After a short time (around 10 minutes), participants report feeling as though the rubber hand “belongs” to them; that is, that the rubber hand is a part of their body. This feeling can be so strong that surprising the participant by hitting the rubber hand with a hammer often leads to a reflexive withdrawing of the obscured hand—even though it is in no danger at all. It appears, then, that our awareness of our own bodies may be the result of multisensory integration.

Crossmodal Phenomena

Crossmodal phenomena are distinguished from multimodal phenomena in that they concern the influence one sensory modality has on the perception of another.

Visual Influence on Auditory Localization

A famous (and commonly experienced) crossmodal illusion is referred to as “the ventriloquism effect.” When a ventriloquist appears to make a puppet speak, she fools the listener into thinking that the location of the origin of the speech sounds is at the puppet’s mouth. In other words, instead of localizing the auditory signal (coming from the mouth of a ventriloquist) to the correct place, our perceptual system localizes it incorrectly (to the mouth of the puppet).

Why might this happen? Consider the information available to the observer about the location of the two components of the stimulus: the sounds from the ventriloquist’s mouth and the visual movement of the puppet’s mouth. Whereas it is very obvious where the visual stimulus is coming from (because you can see it), it is much more difficult to pinpoint the location of the sounds. In other words, the very precise visual location of mouth movement apparently overrides the less well-specified location of the auditory information. More generally, it has been found that the location of a wide variety of auditory stimuli can be affected by the simultaneous presentation of a visual stimulus (Vroomen & De Gelder, 2004). In addition, the ventriloquism effect has been demonstrated for objects in motion: The motion of a visual object can influence the perceived direction of motion of a moving sound source (Soto-Faraco, Kingstone, & Spence, 2003).

Auditory Influence on Visual Perception

A related illusion demonstrates the opposite effect, in which sounds influence visual perception. In the double flash illusion, a participant is asked to stare at a central point on a computer monitor. On the extreme edge of the participant’s vision, a white circle is briefly flashed one time. There is also a simultaneous auditory event: either one beep or two beeps in rapid succession. Remarkably, participants report seeing two visual flashes when the flash is accompanied by two beeps; the same stimulus is seen as a single flash in the context of a single beep or no beep (Shams, Kamitani, & Shimojo, 2000). In other words, the number of heard beeps influences the number of seen flashes!

Another illusion involves the perception of collisions between two circles (called “balls”) moving toward each other and continuing through each other. Such stimuli can be perceived as either two balls moving through each other or as a collision between the two balls that then bounce off each other in opposite directions. Sekuler, Sekuler, and Lau (1997) showed that the presentation of an auditory stimulus at the time of contact between the two balls strongly influenced the perception of a collision event. In this case, the perceived sound influences the interpretation of the ambiguous visual stimulus.

Crossmodal Speech

Several crossmodal phenomena have also been discovered for speech stimuli. These crossmodal speech effects usually show altered perceptual processing of unimodal stimuli (e.g., acoustic patterns) by virtue of prior experience with the alternate unimodal stimulus (e.g., optical patterns). For example, Rosenblum, Miller, and Sanchez (2007) conducted an experiment examining the ability to become familiar with a person’s voice. Their first interesting finding was unimodal: Much like what happens when someone repeatedly hears a person speak, perceivers can become familiar with the “visual voice” of a speaker. That is, they can become familiar with the person’s speaking style simply by seeing that person speak. Even more astounding was their crossmodal finding: Familiarity with this visual information also led to increased recognition of the speaker’s auditory speech, to which participants had never had exposure.

Similarly, it has been shown that when perceivers see a speaking face, they can identify the (auditory-alone) voice of that speaker, and vice versa (Kamachi, Hill, Lander, & Vatikiotis-Bateson, 2003; Lachs & Pisoni, 2004a, 2004b, 2004c; Rosenblum, Smith, Nichols, Lee, & Hale, 2006). In other words, the visual form of a speaker engaged in the act of speaking appears to contain information about what that speaker should sound like. Perhaps more surprisingly, the auditory form of speech seems to contain information about what the speaker should look like.

Conclusion

In this module, we have reviewed some of the main evidence and findings concerning the role of multimodal perception in our experience of the world. It appears that our nervous system (and the cortex in particular) contains considerable architecture for the processing of information arriving from multiple senses. Given this neurobiological setup, and the diversity of behavioral phenomena associated with multimodal stimuli, it is likely that the investigation of multimodal perception will continue to be a topic of interest in the field of experimental perception for many years to come.

Outside Resources

- Article: A review of the neuroanatomy and methods associated with multimodal perception:

- http://dx.doi.org/10.1016/j.neubiorev.2011.04.015

- Journal: Experimental Brain Research Special issue: Crossmodal processing

- http://www.springerlink.com/content/0014-4819/198/2-3

- TED Talk: Optical Illusions

- http://www.ted.com/talks/beau_lotto_optical_illusions_show_how_we_see

- Video: McGurk demo

- Video: The Rubber Hand Illusion

- Web: Double-flash illusion demo

- http://www.cns.atr.jp/~kmtn/soundInducedIllusoryFlash2/

Discussion Questions

- The extensive network of multisensory areas and neurons in the cortex implies that much perceptual processing occurs in the context of multiple inputs. Could the processing of unimodal information ever be useful? Why or why not?

- Some researchers have argued that the Principle of Inverse Effectiveness (PoIE) results from ceiling effects: Multisensory enhancement cannot take place when one modality is sufficient for processing because in such cases it is not possible for processing to be enhanced (because performance is already at the “ceiling”). On the other hand, other researchers claim that the PoIE stems from the perceptual system’s ability to assess the relative value of stimulus cues, and to use the most reliable sources of information to construct a representation of the outside world. What do you think? Could these two possibilities ever be teased apart? What kinds of experiments might one conduct to try to get at this issue?

- In the late 17th century, a scientist named William Molyneux asked the famous philosopher John Locke a question relevant to modern studies of multisensory processing. The question was this: Imagine a person who has been blind since birth, and who is able, by virtue of the sense of touch, to identify three dimensional shapes such as spheres or pyramids. Now imagine that this person suddenly receives the ability to see. Would the person, without using the sense of touch, be able to identify those same shapes visually? Can modern research in multimodal perception help answer this question? Why or why not? How do the studies about crossmodal phenomena inform us about the answer to this question?

Vocabulary

- Bouncing balls illusion

- The tendency to perceive two circles as bouncing off each other if the moment of their contact is accompanied by an auditory stimulus.

- Crossmodal phenomena

- Effects that concern the influence of the perception of one sensory modality on the perception of another.

- Crossmodal receptive field

- A receptive field that can be stimulated by a stimulus from more than one sensory modality.

- Crossmodal stimulus

- A stimulus with components in multiple sensory modalities that interact with each other.

- Double flash illusion

- The false perception of two visual flashes when a single flash is accompanied by two auditory beeps.

- Integrated

- The process by which the perceptual system combines information arising from more than one modality.

- McGurk effect

- An effect in which conflicting visual and auditory components of a speech stimulus result in an illusory percept.

- Multimodal

- Of or pertaining to multiple sensory modalities.

- Multimodal perception

- The effects that concurrent stimulation in more than one sensory modality has on the perception of events and objects in the world.

- Multimodal phenomena

- Effects that concern the binding of inputs from multiple sensory modalities.

- Multisensory convergence zones

- Regions in the brain that receive input from multiple unimodal areas processing different sensory modalities.

- Multisensory enhancement

- See “superadditive effect of multisensory integration.”

- Primary auditory cortex

- A region of the cortex devoted to the processing of simple auditory information.

- Primary visual cortex

- A region of the cortex devoted to the processing of simple visual information.

- Principle of Inverse Effectiveness

- The finding that, in general, for a multimodal stimulus, if the response to each unimodal component (on its own) is weak, then the opportunity for multisensory enhancement is very large. However, if one component—by itself—is sufficient to evoke a strong response, then the effect on the response gained by simultaneously processing the other components of the stimulus will be relatively small.

- Receptive field

- The portion of the world to which a neuron will respond if an appropriate stimulus is present there.

- Rubber hand illusion

- The false perception of a fake hand as belonging to a perceiver, due to multimodal sensory information.

- Sensory modalities

- Types of sense; for example, vision or audition.

- Spatial principle of multisensory integration

- The finding that the superadditive effects of multisensory integration are observed when the sources of stimulation are spatially related to one another.

- Superadditive effect of multisensory integration

- The finding that responses to multimodal stimuli are typically greater than the sum of the independent responses to each unimodal component if it were presented on its own.

- Unimodal

- Of or pertaining to a single sensory modality.

- Unimodal components

- The parts of a stimulus relevant to one sensory modality at a time.

- Unimodal cortex

- A region of the brain devoted to the processing of information from a single sensory modality.

References

- Andersson, U., Lyxell, B., Rönnberg, J., & Spens, K.-E. (2001). Cognitive correlates of visual speech understanding in hearing-impaired individuals. Journal of Deaf Studies and Deaf Education, 6(2), 103–116. doi: 10.1093/deafed/6.2.103

- Auer, E. T., Jr., & Bernstein, L. E. (2007). Enhanced visual speech perception in individuals with early-onset hearing impairment. Journal of Speech, Language, and Hearing Research, 50(5), 1157–1165. doi: 10.1044/1092-4388(2007/080)

- Bernstein, L. E. (2006). Visual speech perception. In E. Vatikiotis-Bateson, G. Bailley, & P. Perrier (Eds.), Audio-visual speech processing. Cambridge, MA: MIT Press.

- Bernstein, L. E., Auer, E. T., Jr., & Tucker, P. E. (2001). Enhanced speechreading in deaf adults: Can short-term training/practice close the gap for hearing adults? Journal of Speech, Language, and Hearing Research, 44, 5–18.

- Botvinick, M., & Cohen, J. (1998). Rubber hands ‘feel’ touch that eyes see. Nature, 391(6669), 756. doi: 10.1038/35784

- Calvert, G. A. (2001). Crossmodal processing in the human brain: Insights from functional neuroimaging studies. Cerebral Cortex, 11, 1110–1123.

- Calvert, G. A., Hansen, P. C., Iversen, S. D., & Brammer, M. J. (2001). Detection of audio-visual integration sites in humans by application of electrophysiological criteria to the BOLD effect. NeuroImage, 14(2), 427–438. doi: 10.1006/nimg.2001.0812

- Driver, J., & Noesselt, T. (2008). Multisensory interplay reveals crossmodal influences on ‘sensory-specific’ brain regions, neural responses, and judgments. Neuron, 57(1), 11–23. doi: 10.1016/j.neuron.2007.12.013

- Edwards, S. B., Ginsburgh, C. L., Henkel, C. K., & Stein, B. E. (1979). Sources of subcortical projections to the superior colliculus in the cat. Journal of Comparative Neurology, 184(2), 309–329. doi: 10.1002/cne.901840207

- Ghazanfar, A. A., & Schroeder, C. E. (2006). Is neocortex essentially multisensory? Trends in Cognitive Sciences, 10(6), 278–285. doi: 10.1016/j.tics.2006.04.008

- Kamachi, M., Hill, H., Lander, K., & Vatikiotis-Bateson, E. (2003). "Putting the face to the voice": Matching identity across modality. Current Biology, 13, 1709–1714.

- Lachs, L., & Pisoni, D. B. (2004a). Crossmodal source identification in speech perception. Ecological Psychology, 16(3), 159–187.

- Lachs, L., & Pisoni, D. B. (2004b). Crossmodal source information and spoken word recognition. Journal of Experimental Psychology: Human Perception & Performance, 30(2), 378–396.

- Lachs, L., & Pisoni, D. B. (2004c). Specification of crossmodal source information in isolated kinematic displays of speech. Journal of the Acoustical Society of America, 116(1), 507–518.

- Macaluso, E., & Driver, J. (2005). Multisensory spatial interactions: A window onto functional integration in the human brain. Trends in Neurosciences, 28(5), 264–271. doi: 10.1016/j.tins.2005.03.008

- McDonald, J. J., Teder-Sälejärvi, W. A., Di Russo, F., & Hillyard, S. A. (2003). Neural substrates of perceptual enhancement by cross-modal spatial attention. Journal of Cognitive Neuroscience, 15(1), 10–19. doi: 10.1162/089892903321107783

- McGurk, H., & MacDonald, J. (1976). Hearing lips and seeing voices. Nature, 264, 746–748.

- Mohammed, T., Campbell, R., MacSweeney, M., Milne, E., Hansen, P., & Coleman, M. (2005). Speechreading skill and visual movement sensitivity are related in deaf speechreaders. Perception, 34(2), 205–216.

- Recanzone, G. H. (2003). Auditory influences on visual temporal rate perception. Journal of Neurophysiology, 89(2), 1078–1093. doi: 10.1152/jn.00706.2002

- Rosenblum, L. D., Miller, R. M., & Sanchez, K. (2007). Lip-read me now, hear me better later: Cross-modal transfer of talker-familiarity effects. Psychological Science, 18(5), 392–396.

- Rosenblum, L. D., Smith, N. M., Nichols, S. M., Lee, J., & Hale, S. (2006). Hearing a face: Cross-modal speaker matching using isolated visible speech. Perception & Psychophysics, 68, 84–93.

- Sekuler, R., Sekuler, A. B., & Lau, R. (1997). Sound alters visual motion perception. Nature, 385(6614), 308. doi: 10.1038/385308a0

- Shams, L., Kamitani, Y., & Shimojo, S. (2000). Illusions. What you see is what you hear. Nature, 408(6814), 788. doi: 10.1038/35048669

- Soto-Faraco, S., Kingstone, A., & Spence, C. (2003). Multisensory contributions to the perception of motion. Neuropsychologia, 41(13), 1847–1862. doi: 10.1016/s0028-3932(03)00185-4

- Spence, C., Senkowski, D., & Röder, B. (2009). Crossmodal processing. Experimental Brain Research, 198(2–3), 107–111. doi: 10.1007/s00221-009-1973-4

- Stein, B. E., & Meredith, M. A. (1993). The merging of the senses. Cambridge, MA: The MIT Press.

- Stein, B. E., & Stanford, T. R. (2008). Multisensory integration: Current issues from the perspective of the single neuron. Nature Reviews Neuroscience, 9(4), 255–266. doi: 10.1038/nrn2331

- Sumby, W. H., & Pollack, I. (1954). Visual contribution to speech intelligibility in noise. Journal of the Acoustical Society of America, 26, 212–215.

- Vasconcelos, N., Pantoja, J., Belchior, H., Caixeta, F. V., Faber, J., Freire, M. A. M., . . . Ribeiro, S. (2011). Cross-modal responses in the primary visual cortex encode complex objects and correlate with tactile discrimination. Proceedings of the National Academy of Sciences, 108(37), 15408–15413. doi: 10.1073/pnas.1102780108

- Vroomen, J., & De Gelder, B. (2004). Perceptual effects of cross-modal stimulation: Ventriloquism and the freezing phenomenon. In G. A. Calvert, C. Spence, & B. E. Stein (Eds.), Handbook of multisensory processes. Cambridge, MA: MIT Press.

Authors

Lorin Lachs, PhD, is a Professor of Psychology at California State University, Fresno. His research concerns multisensory perception in speech and virtual reality. His interests include the history and philosophy of cognitive science, the neural basis of consciousness, video gaming, and playing with his three children.

Creative Commons License

Multi-Modal Perception by Lorin Lachs is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License. Permissions beyond the scope of this license may be available in our Licensing Agreement.