Vision

By Simona Buetti and Alejandro Lleras, University of Illinois at Urbana-Champaign

Vision is the sensory modality that transforms light into a psychological experience of the world around you, with minimal bodily effort. This module provides an overview of the most significant steps in this transformation and strategies that your brain uses to achieve this visual understanding of the environment.

Learning Objectives

- Describe how the eye transforms light information into neural energy.

- Understand what sorts of information the brain is interested in extracting from the environment and why it is useful.

- Describe how the visual system has adapted to deal with different lighting conditions.

- Understand the value of having two eyes.

- Understand why we have color vision.

- Understand the interdependence between vision and other brain functions.

What Is Vision?

Think about the spectacle of a starry night. You look up at the sky, and thousands of photons from distant stars come crashing into your retina, a light-sensitive structure at the back of your eyeball. These photons are millions of years old and have survived a trip across the universe, only to run into one of your photoreceptors. Tough luck: in one thousandth of a second, this little bit of light energy becomes the fuel to a photochemical reaction known as photoactivation. The light energy becomes neural energy and triggers a cascade of neural activity that, a few hundredths of a second later, will result in your becoming aware of that distant star. You and the universe united by photons. That is the amazing power of vision. Light brings the world to you. Without moving, you know what’s out there. You can recognize friends coming to meet you before you are able to hear them coming, ripe fruits from green ones on trees without having to taste them and before reaching out to grab them. You can also tell how quickly a ball is moving in your direction (Will it hit you? Can you hit it?).

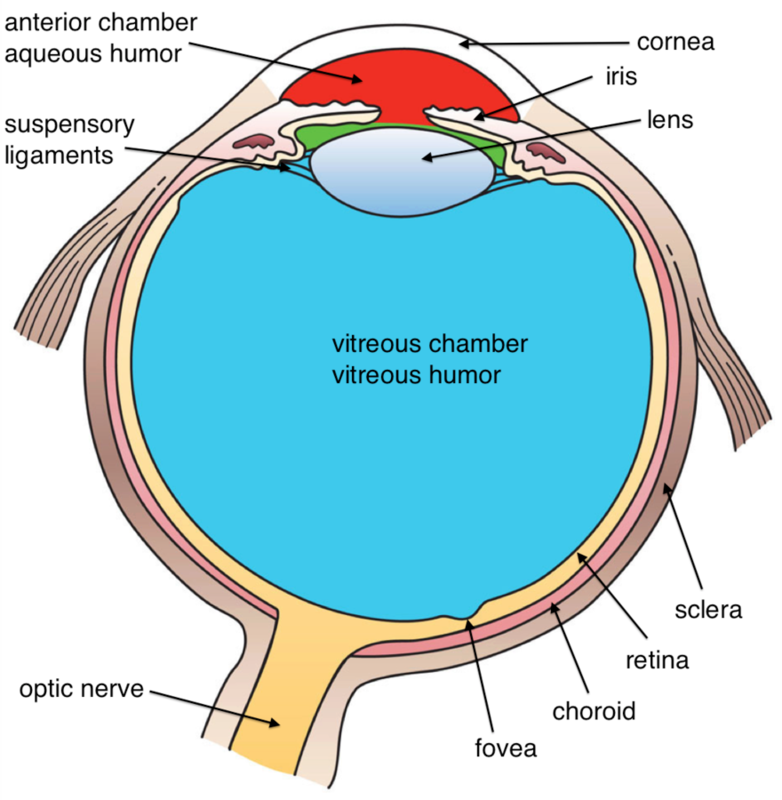

How does all of that happen? First, light enters the eyeball through a tiny hole known as the pupil and, thanks to the refractive properties of your cornea and lens, this light signal gets projected sharply into the retina (see Outside Resources for links to a more detailed description of the eye structure). There, light is transduced into neural energy by about 200 million photoreceptor cells.

This is where the information carried by the light about distant objects and colors starts being encoded by our brain. There are two different types of photoreceptors: rods and cones. The human eye contains more rods than cones. Rods give us sensitivity under dim lighting conditions and allow us to see at night. Cones allow us to see fine details in bright light and give us the sensation of color. Cones are tightly packed around the fovea (the central region of the retina behind your pupil) and more sparsely elsewhere. Rods populate the periphery (the region surrounding the fovea) and are almost absent from the fovea.

But vision is far more complex than just catching photons. The information encoded by the photoreceptors undergoes a rapid and continuous series of ever more complex analyses so that, eventually, you can make sense of what’s out there. At the fovea, visual information is encoded separately from tiny portions of the world (each about half the width of a human hair viewed at arm’s length) so that eventually the brain can reconstruct in great detail fine visual differences from locations at which you are directly looking. This fine level of encoding requires lots of light and it is slow going (neurally speaking). In contrast, in the periphery, there is a different encoding strategy: detail is sacrificed in exchange for sensitivity. Information is summed across larger sections of the world. This aggregation occurs quickly and allows you to detect dim signals under very low levels of light, as well as detect sudden movements in your peripheral vision.
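The trade-off between detail and sensitivity can be sketched in a few lines of Python. This is only an illustration, not a model of real retinal circuitry: the photon counts, the `THRESHOLD` value, and the function names are all hypothetical.

```python
def foveal_units(photons):
    """One unit per receptor: fine spatial detail, but no pooling of light."""
    return list(photons)


def peripheral_units(photons, pool_size):
    """One unit per block of receptors: photon counts are summed within each block."""
    return [sum(photons[i:i + pool_size]) for i in range(0, len(photons), pool_size)]


# Hypothetical number of photons a unit must collect before it signals.
THRESHOLD = 4

# Hypothetical photon counts at eight neighboring receptors in a dim scene.
dim_scene = [1, 0, 2, 1, 0, 1, 1, 0]

fine = foveal_units(dim_scene)
pooled = peripheral_units(dim_scene, pool_size=4)

print([count >= THRESHOLD for count in fine])    # which single-receptor units signal
print([count >= THRESHOLD for count in pooled])  # which pooled units signal
```

In this toy scene no single-receptor unit collects enough light to signal, whereas one pooled unit does. The pooled unit, however, can no longer say which of its four receptors caught the light, which is the sense in which detail is traded for sensitivity.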

The Importance of Contrast

What happens next? Well, you might think that the eye would do something like record the amount of light at each location in the world and then send this information to the visual-processing areas of the brain (an astounding 30% of the cortex is influenced by visual signals!). But, in fact, that is not what eyes do. As soon as photoreceptors capture light, the nervous system gets busy analyzing differences in light, and it is these differences that get transmitted to the brain. The brain, it turns out, cares little about the overall amount of light coming from a specific part of the world, or in the scene overall. Rather, it wants to know: does the light coming from this one point differ from the light coming from the point next to it? Place your hand on the table in front of you. The contour of your hand is actually determined by the difference in light—the contrast—between the light coming from the skin in your hand and the light coming from the table underneath. To find the contour of your hand, we simply need to find the regions in the image where the difference in light between two adjacent points is maximal. Two points on your skin will reflect similar levels of light back to you, as will two points on the table. On the other hand, two points that fall on either side of the boundary contour between your hand and the table will reflect very different light.
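The hand-on-the-table example can be written out as a short Python sketch. The light levels below are made up for illustration, and the function names are hypothetical; the only idea taken from the text is that a contour sits where the difference between two adjacent points is largest.

```python
def adjacent_differences(luminance):
    """Absolute difference in light between each pair of neighboring points."""
    return [abs(b - a) for a, b in zip(luminance, luminance[1:])]


def find_contour(luminance):
    """Return i such that the largest difference lies between points i and i + 1."""
    diffs = adjacent_differences(luminance)
    return max(range(len(diffs)), key=diffs.__getitem__)


# Hypothetical light levels along a line that crosses from the table onto the hand.
row = [20, 21, 19, 20, 80, 82, 81, 79]

print(adjacent_differences(row))
print(find_contour(row))
```

Pairs of points that both fall on the table, or both on the hand, produce small differences. The single large difference marks the boundary between the two surfaces, whatever the overall level of light happens to be.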

The fact that the brain is interested in coding contrast in the world reveals something deeply important about the forces that drove the evolution of our brain: encoding the absolute amount of light in the world tells us little about what is out there. But if your brain can detect the sudden appearance of a difference in light somewhere in front of you, then it must be that something new is there. That contrast signal is information. That information may represent something that you like (food, a friend) or something dangerous approaching (a tiger, a cliff). The rest of your visual system will work hard to determine what that thing is, but as quickly as 10ms after light enters your eyes, ganglion cells in your retinae have already encoded all the differences in light from the world in front of you.

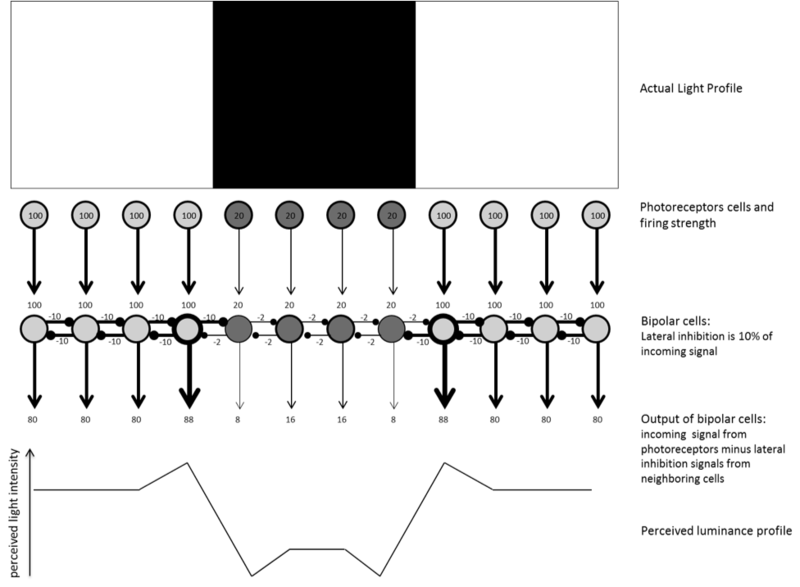

Contrast is so important that your neurons go out of their way not only to encode differences in light but to exaggerate those differences for you, lest you miss them. Neurons achieve this via a process known as lateral inhibition. When a neuron is firing in response to light, it produces two signals: an output signal to pass on to the next level in vision, and a lateral signal to inhibit all neurons that are next to it. This makes sense on the assumption that nearby neurons are likely responding to the same light coming from nearby locations, so this information is somewhat redundant. The magnitude of the lateral inhibitory signal a neuron produces is proportional to the excitatory input that neuron receives: the more a neuron fires, the stronger the inhibition it produces. Figure 1 illustrates how lateral inhibition amplifies contrast signals at the edges of surfaces.
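A minimal sketch of lateral inhibition along a single row of neurons is given below. It assumes that each neuron is inhibited only by its two immediate neighbors and that inhibition is a fixed fraction (`strength`, a hypothetical parameter) of each neighbor's input; real retinal wiring is more elaborate.

```python
def lateral_inhibition(inputs, strength=0.1):
    """Output of each neuron = its own excitation minus inhibition from its
    two immediate neighbors, where inhibition is proportional to their input."""
    last = len(inputs) - 1
    outputs = []
    for i, excitation in enumerate(inputs):
        left = inputs[max(i - 1, 0)]      # neurons at the ends reuse their own value
        right = inputs[min(i + 1, last)]
        outputs.append(excitation - strength * (left + right))
    return outputs


# Hypothetical light levels: a dark surface (10) next to a bright surface (50).
light = [10, 10, 10, 10, 50, 50, 50, 50]

print(lateral_inhibition(light))
```

Away from the edge, every neuron is inhibited by neighbors that see the same light, so the outputs are flat. At the edge, the last "dark" neuron is strongly inhibited by its bright neighbor and responds less than the other dark neurons, while the first "bright" neuron is weakly inhibited by its dark neighbor and responds more than the other bright neurons. The difference between the two sides is therefore largest right at the boundary.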

Sensitivity to Different Light Conditions

Let’s think for a moment about the range of conditions in which your visual system must operate day in and day out. When you take a walk outdoors on a sunny day, billions of photons enter your eyeballs every second. In contrast, when you wake up in the middle of the night in a dark room, there might be only a few hundred photons per second entering your eyes. To deal with these extremes, the visual system relies on the different properties of the two types of photoreceptors. Rods are mostly responsible for processing light when photons are scarce (just a single photon can make a rod fire!), but it takes time to replenish the visual pigment that rods require for photoactivation. So, under bright conditions, rods are quickly bleached (Stuart & Brige, 1996) and cannot keep up with the constant barrage of photons hitting them. That’s when the cones become useful. Cones require more photons to fire and, more critically, their photopigments replenish much faster than rods’ photopigments, allowing them to keep up when photons are abundant.

What happens when you abruptly change lighting conditions? Under bright light, your rods are bleached. When you move into a dark environment, it will take time (up to 30 minutes) before they chemically recover (Hurley, 2002). Once they do, you will begin to see things around you that initially you could not. This phenomenon is called dark adaptation. When you go from dark to bright light (as you exit a tunnel on a highway, for instance), your rods will be bleached in a blaze and you will be blinded by the sudden light for about 1 second. However, your cones are ready to fire! Their firing will take over and you will quickly begin to see at this higher level of light.

A similar, but more subtle, adjustment occurs when the change in lighting is not so drastic. Think about your experience of reading a book at night in your bed compared to reading outdoors: the room may feel fairly well illuminated to you (enough so you can read), but the light bulbs in your room are not producing the billions of photons that you encounter outside. In both cases, you feel that your experience is that of a well-lit environment. You don’t feel one experience as millions of times brighter than the other. This is because vision (like much of perception) is not proportional: seeing twice as many photons does not produce a sensation of seeing twice as bright a light. The visual system tunes into the current experience by favoring a range of contrast values that is most informative in that environment (Gardner et al., 2005). This is the concept of contrast gain: the visual system determines the mean contrast in a scene and represents values around that mean contrast best, while ignoring smaller contrast differences. (See the Outside Resources section for a demonstration.)
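One way to picture contrast gain is with a saturating response curve whose midpoint shifts to the mean contrast of the current scene. The sketch below uses a curve of the Naka-Rushton form, which is often used to describe contrast responses; that choice, the exponent, and the contrast values are assumptions made for illustration and are not taken from this module.

```python
def response(contrast, mean_contrast, exponent=2.0):
    """Saturating response between 0 and 1 that equals 0.5 when the contrast
    matches the mean contrast the system is currently adapted to."""
    c = contrast ** exponent
    return c / (c + mean_contrast ** exponent)


# The same small pair of contrasts, viewed under two hypothetical adaptation states.
low, high = 0.10, 0.12

adapted_nearby = response(high, mean_contrast=0.1) - response(low, mean_contrast=0.1)
adapted_far = response(high, mean_contrast=0.8) - response(low, mean_contrast=0.8)

print(adapted_nearby)  # response difference when adapted to a mean contrast of 0.1
print(adapted_far)     # response difference when adapted to a mean contrast of 0.8
```

The same physical difference produces a much larger change in response when it lies near the adapted mean than when it lies far below it. Under these assumptions, values around the mean are represented best and small differences far from it are largely ignored.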

The Reconstruction Process

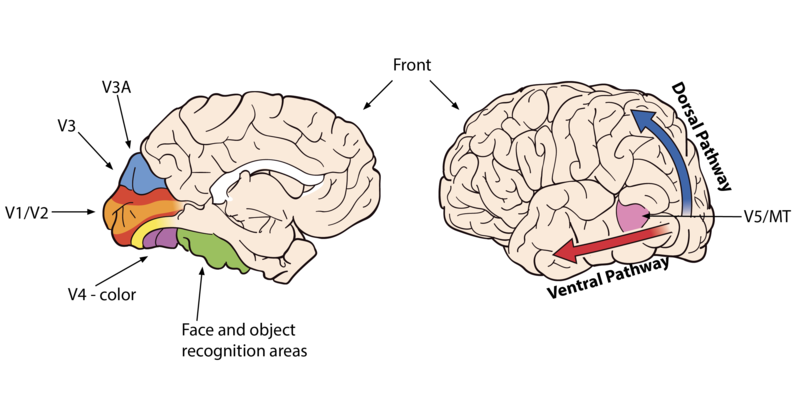

What happens once information leaves your eyes and enters the brain? Neurons project first into the thalamus, in a section known as the lateral geniculate nucleus. The information then splits and projects towards two different parts of the brain. Most of the computations regarding reflexive eye movements are computed in subcortical regions, the evolutionarily older part of the brain. Reflexive eye movements allow you to quickly orient your eyes towards areas of interest and to track objects as they move. The more complex computations, those that eventually allow you to have a visual experience of the world, all happen in the cortex, the evolutionarily newer region of the brain. The first stop in the cortex is at the primary visual cortex (also known as V1). Here, the “reconstruction” process begins in earnest: based on the contrast information arriving from the eyes, neurons will start computing information about color and simple lines, detecting various orientations and thicknesses. Small-scale motion signals are also computed (Hubel & Wiesel, 1962).

As information begins to flow towards other “higher” areas of the system, more complex computations are performed. For example, edges are assigned to the object to which they belong, backgrounds are separated from foregrounds, colors are assigned to surfaces, and the global motion of objects is computed. Many of these computations occur in specialized brain areas. For instance, an area called MT processes global-motion information; the parahippocampal place area identifies locations and scenes; the fusiform face area specializes in identifying objects for which fine discriminations are required, like faces. There is even a brain region specialized in letter and word processing. These visual-recognition areas are located along the ventral pathway of the brain (also known as the What pathway). Other brain regions along the dorsal pathway (or Where-and-How pathway) will compute information about self- and object-motion, allowing you to interact with objects, navigate the environment, and avoid obstacles (Goodale & Milner, 1992).

Now that you have a basic understanding of how your visual system works, you can ask yourself the question: why do you have two eyes? Everything that we discussed so far could be computed with information coming from a single eye. So why two? Looking at the animal kingdom gives us a clue. Animals who tend to be prey have eyes located on opposite sides of their skull. This allows them to detect predators whenever one appears anywhere around them. Humans, like most predators, have two eyes pointing in the same direction, encoding almost the exact scene twice. This redundancy gives us a binocular advantage: having two eyes not only provides you with two chances at catching a signal in front of you, but the minute difference in perspective that you get from each eye is used by your brain to reconstruct the sense of three-dimensional space. You can get an estimate of how far distant objects are from you, their size, and their volume. This is no easy feat: the signal in each eye is a two-dimensional projection of the world, like two separate pictures drawn upon your retinae. Yet, your brain effortlessly provides you with a sense of depth by combining those two signals. This 3-D reconstruction process also relies heavily on all the knowledge you acquired through experience about spatial information. For instance, your visual system learns to interpret how the volume, distance, and size of objects change as they move closer or farther from you. (See the Outside Resources section for demonstrations.)

The Experience of Color

Perhaps one of the most beautiful aspects of vision is the richness of the color experience that it provides us. One of the challenges that we have as scientists is to understand why the human color experience is what it is. Perhaps you have heard that dogs only have 2 types of color photoreceptors, whereas humans have 3, chickens have 4, and mantis shrimp have 16. Why is there such variation across species? Scientists believe each species has evolved with different needs and uses color perception to signal information about food, reproduction, and health that are unique to their species. For example, humans have a specific sensitivity that allows you to detect slight changes in skin tone. You can tell when someone is embarrassed, aroused, or ill. Detecting these subtle signals is adaptive in a social species like ours.

How is color coded in the brain? The two leading theories of color perception were proposed in the mid-19th century, about 100 years before physiological evidence was found to corroborate them both (Svaetichin, 1956). Trichromacy theory, put forward by Young (1802) and Helmholtz (1867), holds that the eye has three different types of color-sensitive cells. It is based on the observation that any one color can be reproduced by combining lights from three lamps of different hue. If you can separately adjust the intensity of each light, at some point you will find the right combination of the three lights to match any color in the world. This principle is used today in TVs, computer screens, and any colored display. If you look closely enough at a pixel, you will find that it is composed of a blue, a red, and a green light, of varying intensities. Regarding the retina, humans have three types of cones: S-cones, M-cones, and L-cones (also known as blue, green, and red cones, respectively) that are most sensitive to three different ranges of wavelengths of light.
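The lamp-matching observation amounts to solving three equations in three unknowns: find the lamp intensities whose combined effect on the three cone types equals the effect of the target color. The sketch below does this with Cramer's rule. The cone responses to each lamp are invented numbers chosen only to make the arithmetic easy to follow, and `match_color` is a hypothetical helper name.

```python
def det3(m):
    """Determinant of a 3 x 3 matrix given as three rows."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)


def match_color(lamp_responses, target):
    """Lamp intensities that reproduce the target cone responses.

    lamp_responses[row][col] is the response of cone type `row` (L, M, S)
    to lamp `col` at unit intensity; target holds the L, M, S responses to match.
    """
    base = det3(lamp_responses)
    if base == 0:
        raise ValueError("the three lamps do not produce independent cone responses")
    intensities = []
    for col in range(3):
        # Cramer's rule: swap one column for the target and compare determinants.
        swapped = [
            [target[r] if c == col else lamp_responses[r][c] for c in range(3)]
            for r in range(3)
        ]
        intensities.append(det3(swapped) / base)
    return intensities


# Hypothetical responses of the L, M, and S cones (rows) to a red, a green,
# and a blue lamp (columns), each at unit intensity.
LAMPS = [
    [0.8, 0.4, 0.0],
    [0.3, 0.8, 0.1],
    [0.0, 0.1, 0.9],
]

# Hypothetical cone responses produced by the color we want to match.
target = [0.5, 0.425, 0.7]

print(match_color(LAMPS, target))  # red, green, and blue lamp intensities
```

Because the eye reports only three numbers per location, two lights that produce the same three cone responses look identical, even if their physical spectra differ. That is why three adjustable lamps are enough for a display.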

Around the same time, Hering made a puzzling discovery: some colors are impossible to create. Whereas you can make yellowish greens, bluish reds, greenish blues, and reddish yellows by combining two colors, you can never make a reddish green or a bluish yellow. This observation led Hering (1892) to propose the Opponent Process theory of color: color is coded via three opponent channels (red-green, blue-yellow, and black-white). Within each channel, a comparison is constantly computed between the two elements in the pair. In other words, colors are encoded as differences between two hues and not as simple combinations of hues. Again, what matters to the brain is contrast. When one element is stronger than the other, the stronger color is perceived and the weaker one is suppressed. You can experience this phenomenon by following the link below.

http://nobaproject.com/assets/modules/module-visio...

When both colors in a pair are present to equal extents, the color perception is canceled and we perceive a level of grey. This is why you cannot see a reddish green or a bluish yellow: they cancel each other out. By the way, if you are wondering where the yellow signal comes from, it turns out that it is computed by averaging the M- and L-cone signals. Are these colors uniquely human colors? Some think that they are: the red-green contrast, for example, is finely tuned to detect changes in human skin tone so you can tell when someone blushes or becomes pale. So, the next time you go out for a walk with your dog, look at the sunset and ask yourself, what color does my dog see? Probably none of the orange hues you do!
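The opponent comparisons can be sketched as simple differences between cone signals. The sketch assumes, as a simplification, that the red-green channel is the L-cone signal minus the M-cone signal; the yellow signal is the average of the M- and L-cone signals, as described above. The black-white channel is left out because this module does not specify how it is computed, and the cone values are hypothetical.

```python
def opponent_channels(l_cone, m_cone, s_cone):
    """Two chromatic opponent signals computed from three cone responses."""
    yellow = (l_cone + m_cone) / 2  # yellow = average of the M- and L-cone signals
    return {
        "red_green": l_cone - m_cone,    # positive: reddish, negative: greenish
        "blue_yellow": s_cone - yellow,  # positive: bluish, negative: yellowish
    }


# Hypothetical cone responses to a long-wavelength light.
print(opponent_channels(0.9, 0.3, 0.1))

# Equal responses in all three cone types: both channels cancel to zero.
print(opponent_channels(0.5, 0.5, 0.5))
```

Each channel is a single signed number, so it can signal red or green, but never both at once. When the two members of a pair are equally strong, the difference is zero and no hue is signaled on that channel, which matches the cancellation described above.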

So now, you can ask yourself the question: do all humans experience color in the same way? Color-blind people, as you can imagine, do not see all the colors that the rest of us see, because they lack one (or more) types of cones in their retina. Incidentally, there are a few women who actually have four different types of cones in their eyes, and recent research suggests that their experience of color can be (but is not always) richer than that of three-coned people. A slightly different question, though, is whether all three-coned people have the same internal experiences of colors: is the red inside your head the same red inside your mom’s head? That is an almost impossible question to answer, one that philosophers have debated for millennia, yet recent data suggest that there might in fact be cultural differences in the way we perceive color. As it turns out, not all cultures categorize colors in the same way. Some groups “see” different shades of what we in the Western world would call the “same” color as categorically different colors. The Berinmo tribe in New Guinea, for instance, appear to experience green shades that denote leaves that are alive as belonging to an entirely different color category than the sort of green shades that denote dying leaves. Russians, too, appear to experience light and dark shades of blue as different categories of colors, in a way that most Westerners do not. Further, current brain imaging research suggests that people’s brains change (increase in white-matter volume) when they learn new color categories! These are intriguing and suggestive findings that seem to indicate that our cultural environment may in fact have a small but definite impact on how people use and experience colors across the globe.

Integration with Other Modalities

Vision is not an encapsulated system. It interacts with and depends on other sensory modalities. For example, when you move your head in one direction, your eyes reflexively move in the opposite direction to compensate, allowing you to maintain your gaze on the object that you are looking at. This reflex is called the vestibulo-ocular reflex. It is achieved by integrating information from both the visual and the vestibular system (which knows about body motion and position). You can experience this compensation quite simply. First, while you keep your head still and your gaze looking straight ahead, wave your finger in front of you from side to side. Notice how the image of the finger appears blurry. Now, keep your finger steady and look at it while you move your head from side to side. Notice how your eyes reflexively move to compensate for the movement of your head and how the image of the finger stays sharp and stable. Vision also interacts with your proprioceptive system, to help you find where all your body parts are, and with your auditory system, to help you understand the sounds people make when they speak. You can learn more about this in the Noba module about multimodal perception (http://noba.to/cezw4qyn).

Finally, vision is also often implicated in a blending-of-sensations phenomenon known as synesthesia. Synesthesia occurs when one sensory signal gives rise to two or more sensations. The most common type is grapheme-color synesthesia. About 1 in 200 individuals experience a sensation of color associated with specific letters, numbers, or words: the number 1 might always be seen as red, the number 2 as orange, etc. But the more fascinating forms of synesthesia blend sensations from entirely different sensory modalities, like taste and color or music and color: the taste of chicken might elicit a sensation of green, for example, and the timbre of violin a deep purple.

Concluding Remarks

We are at an exciting moment in our scientific understanding of vision. We have just begun to get a functional understanding of the visual system. That understanding is not yet advanced enough for us to recreate artificial visual systems (i.e., we still cannot make robots that “see” and understand light signals as we do), but we are getting there. Just recently, major breakthroughs in vision science have allowed researchers to significantly improve retinal prosthetics: photosensitive circuits that can be implanted on the back of the eyeball of blind people, connect to visual areas of the brain, and have the capacity to partially restore a “visual experience” to these patients (Nirenberg & Pandarinath, 2012). And using functional magnetic resonance imaging, we can now “decode” from your brain activity the images that you saw in your dreams while you were asleep (Horikawa, Tamaki, Miyawaki, & Kamitani, 2013)! Yet, there is still so much more to understand. Consider this: if vision is a construction process that takes time, whatever we see now is no longer what is in front of us. Yet, humans can do amazing time-sensitive feats like hitting a 90-mph fastball in a baseball game. It appears then that a fundamental function of vision is not just to know what is happening around you now, but actually to make an accurate inference about what you are about to see next (Enns & Lleras, 2008), so that you can keep up with the world. Understanding how this future-oriented, predictive function of vision is achieved in the brain is probably the next big challenge in this fascinating realm of research.

Outside Resources

- Video: Acquired knowledge and its impact on our three-dimensional interpretation of the world - 3D Street Art

- Video: Acquired knowledge and its impact on our three-dimensional interpretation of the world - Anamorphic Illusions

- Video: Acquired knowledge and its impact on our three-dimensional interpretation of the world - Optical Illusion

- Web: Amazing library with visual phenomena and optical illusions, explained

- http://michaelbach.de/ot/index.html

- Web: Anatomy of the eye

- https://www.aao.org/eye-health/anatomy/parts-of-eye

- Web: Demonstration of contrast gain adaptation

- https://michaelbach.de/ot/lum-contrastAdapt/

- Web: Demonstration of illusory contours and lateral inhibition. Mach bands

- http://michaelbach.de/ot/lum-MachBands/index.html

- Web: Demonstration of illusory contrast and lateral inhibition. The Hermann grid

- http://michaelbach.de/ot/lum_herGrid/

- Web: Further information regarding what and where/how pathways

- http://www.scholarpedia.org/article/What_and_where_pathways

Discussion Questions

- When running in the dark, it is recommended that you never look straight at the ground. Why? What would be a better strategy to avoid obstacles?

- The majority of ganglion cells in the eye specialize in detecting drops in the amount of light coming from a given location. That is, they increase their firing rate when they detect less light coming from a specific location. Why might the absence of light be more important than the presence of light? Why would it be evolutionarily advantageous to code this type of information?

- There is a hole in each one of your eyeballs called the optic disk. This is where veins enter the eyeball and where neurons (the axons of the ganglion cells) exit the eyeball. Why do you not see two holes in the world all the time? Close one eye now. Why do you not see a hole in the world now? To “experience” a blind spot, follow the instructions on this website: http://michaelbach.de/ot/cog_blindSpot/index.html

- Imagine you were given the task of testing the color-perception abilities of a newly discovered species of monkeys in the South Pacific. How would you go about it?

- An important aspect of emotions is that we sense them in ourselves much in the same way as we sense other perceptions like vision. Can you think of an example where the concept of contrast gain can be used to understand people’s responses to emotional events?

Vocabulary

- Binocular advantage

- Benefits from having two eyes as opposed to a single eye.

- Cones

- Photoreceptors that operate in lighted environments and can encode fine visual details. There are three different kinds (S or blue, M or green and L or red) that are each sensitive to slightly different types of light. Combined, these three types of cones allow you to have color vision.

- Contrast

- Relative difference in the amount and type of light coming from two nearby locations.

- Contrast gain

- Process where the sensitivity of your visual system can be tuned to be most sensitive to the levels of contrast that are most prevalent in the environment.

- Dark adaptation

- Process that allows you to become sensitive to very small levels of light, so that you can actually see in the near-absence of light.

- Lateral inhibition

- A signal produced by a neuron aimed at suppressing the response of nearby neurons.

- Opponent Process Theory

- Theory of color vision that assumes there are four different basic colors, organized into two pairs (red/green and blue/yellow) and proposes that colors in the world are encoded in terms of the opponency (or difference) between the colors in each pair. There is an additional black/white pair responsible for coding light contrast.

- Photoactivation

- A photochemical reaction that occurs when light hits photoreceptors, producing a neural signal.

- Primary visual cortex (V1)

- Brain region located in the occipital cortex (toward the back of the head) responsible for processing basic visual information like the detection, thickness, and orientation of simple lines, color, and small-scale motion.

- Rods

- Photoreceptors that are very sensitive to light and are mostly responsible for night vision.

- Synesthesia

- The blending of two or more sensory experiences, or the automatic activation of a secondary (indirect) sensory experience due to certain aspects of the primary (direct) sensory stimulation.

- Trichromacy theory

- Theory that proposes that all of your color perception is fundamentally based on the combination of three (not two, not four) different color signals.

- Vestibulo-ocular reflex

- Coordination of motion information with visual information that allows you to maintain your gaze on an object while you move.

- What pathway

- Pathway of neural processing in the brain that is responsible for your ability to recognize what is around you.

- Where-and-How pathway

- Pathway of neural processing in the brain that is responsible for you knowing where things are in the world and how to interact with them.

References

- Enns, J. T., & Lleras, A. (2008). New evidence for prediction in human vision. Trends in Cognitive Sciences, 12, 327–333.

- Gardner, J. L., Sun, P., Waggoner, R. A., Ueno, K., Tanaka, K., & Cheng, K. (2005). Contrast adaptation and representation in human early visual cortex. Neuron, 47, 607–620.

- Goodale, M. A., & Milner, A. D. (1992). Separate visual pathways for perception and action. Trends in Neurosciences, 15, 20–25.

- Helmholtz, H. von. (1867). Handbuch der Physiologischen Optik. Leipzig: Leopold Voss.

- Hering, E. (1892). Grundzüge der Lehre vom Lichtsinn. Berlin, Germany: Springer.

- Horikawa, T., Tamaki, M., Miyawaki, Y., & Kamitani, Y. (2013). Neural decoding of visual imagery during sleep. Science, 340(6132), 639–642.

- Hubel, D. H., & Wiesel, T. N. (1962). Receptive fields, binocular interaction, and functional architecture in the cat’s visual cortex. Journal of Physiology, 160, 106–154.

- Hurley, J. B. (2002). Shedding light on adaptation. Journal of General Physiology, 119, 125–128.

- Nirenberg, S., & Pandarinath, C. (2012). Retinal prosthetic strategy with the capacity to restore normal vision. Proceedings of the National Academy of Sciences, 109(37), 15012–15017.

- Stuart, J. A., & Brige, R. R. (1996). Characterization of the primary photochemical events in bacteriorhodopsin and rhodopsin. In A. G. Lee (Ed.), Rhodopsin and G-protein linked receptors (Part A, Vol. 2, pp. 33–140). Greenwich, CT: JAI.

- Svaetichin, G. (1956). Spectral response curves from single cones. Acta Physiologica Scandinavica, Suppl. 134, 17–46.

- Young, T. (1802). Bakerian lecture: On the theory of light and colours. Philosophical Transactions of the Royal Society of London, 92, 12–48.

Authors

Simona Buetti is a postdoctoral fellow at the University of Illinois, where she teaches sensation and perception. She received a Ph.D. in Psychology (summa cum laude) from the University of Geneva, Switzerland. In 2010, she was awarded a postdoctoral fellowship from the Swiss National Science Foundation to support her postdoctoral work.

Alejandro Lleras, Associate Professor of Psychology at the University of Illinois, received a CAREER award for young investigators from the National Science Foundation for his research on perception and visual attention and was named the 2010-2011 Helen Corley Petit Scholar for outstanding achievements as an Assistant Professor.

Creative Commons License

Vision by Simona Buetti and Alejandro Lleras is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License. Permissions beyond the scope of this license may be available in our Licensing Agreement.
